Project Overview

This project upgraded an older AM4 desktop computer into a capable local LLM inference machine. With an AMD Radeon AI PRO R9700 graphics card and its 32GB of GDDR6 memory, the system runs large language models locally using LM Studio on Ubuntu 24.04. The setup keeps all data on the machine, gives full control over AI workloads, and makes it easy to experiment with various open-source models without relying on cloud services.

Technical Specifications

Processor

AMD Ryzen 5 2600

6-core/12-thread CPU

Graphics Card

AMD Radeon AI PRO R9700

32GB GDDR6 VRAM

System Memory

32GB DDR4 RAM

Dual-channel configuration

Storage

512GB NVMe SSD

OS and model storage

Operating System

Ubuntu 24.04 LTS

Optimized for AI workloads

Software

LM Studio

Local LLM inference platform
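
As a rough illustration of how a script could talk to this machine, the sketch below builds a chat request for LM Studio's local OpenAI-compatible server. It assumes the server has been started in LM Studio and is listening on the default port 1234. The model name `local-model` and the helper names `build_chat_request` and `ask` are hypothetical placeholders, not part of this build.

```python
import json
import urllib.request

# Assumption: LM Studio's local server is running on its default port.
BASE_URL = "http://localhost:1234/v1"


def build_chat_request(prompt, model="local-model", temperature=0.7):
    """Build an OpenAI-style chat completion request aimed at the local server."""
    payload = {
        # Hypothetical placeholder; use the model identifier shown in LM Studio.
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, **kwargs):
    """Send the prompt and return the reply text (needs the server running)."""
    request = build_chat_request(prompt, **kwargs)
    with urllib.request.urlopen(request, timeout=120) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Example usage once the server is up:
# print(ask("Summarize why local inference helps privacy."))
```

Because the request only ever targets `localhost`, prompts and replies stay on the machine, which is the point of the setup.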

Key Features

Privacy First

All AI processing happens locally with no data sent to external servers

High Performance

32GB of VRAM keeps large models entirely in GPU memory for fast inference

Cost Effective

Repurposes an existing AM4 platform, reducing overall project cost

Experimentation Ready

A flexible platform for testing a range of open-source LLMs
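
To give a feel for which models fit in 32GB of VRAM, here is a back-of-the-envelope sketch. It assumes memory use is roughly the weight storage (parameter count times bits per weight) plus a flat allowance for KV cache and runtime buffers. The 2 GB overhead and the function names are illustrative assumptions, not measurements from this build; real usage depends on context length and quantization format.

```python
def estimate_vram_gb(params_billion, bits_per_weight, overhead_gb=2.0):
    """Rough VRAM need in GB: weight storage plus a flat overhead allowance."""
    # 1e9 parameters * (bits / 8) bytes each is that many GB (1 GB = 1e9 bytes).
    weights_gb = params_billion * bits_per_weight / 8
    return weights_gb + overhead_gb


def fits(params_billion, bits_per_weight, vram_gb=32.0, overhead_gb=2.0):
    """Return True if the estimated footprint fits in the given VRAM."""
    return estimate_vram_gb(params_billion, bits_per_weight, overhead_gb) <= vram_gb


# Example: a 32B-parameter model at 4 bits per weight.
print(estimate_vram_gb(32, 4))  # 18.0
print(fits(32, 4))              # True
print(fits(70, 4))              # False (about 37 GB estimated)
```

Under these assumptions, a 4-bit model in the 30B range leaves headroom for longer contexts, while a 70B model at the same precision would not fit entirely on the card.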

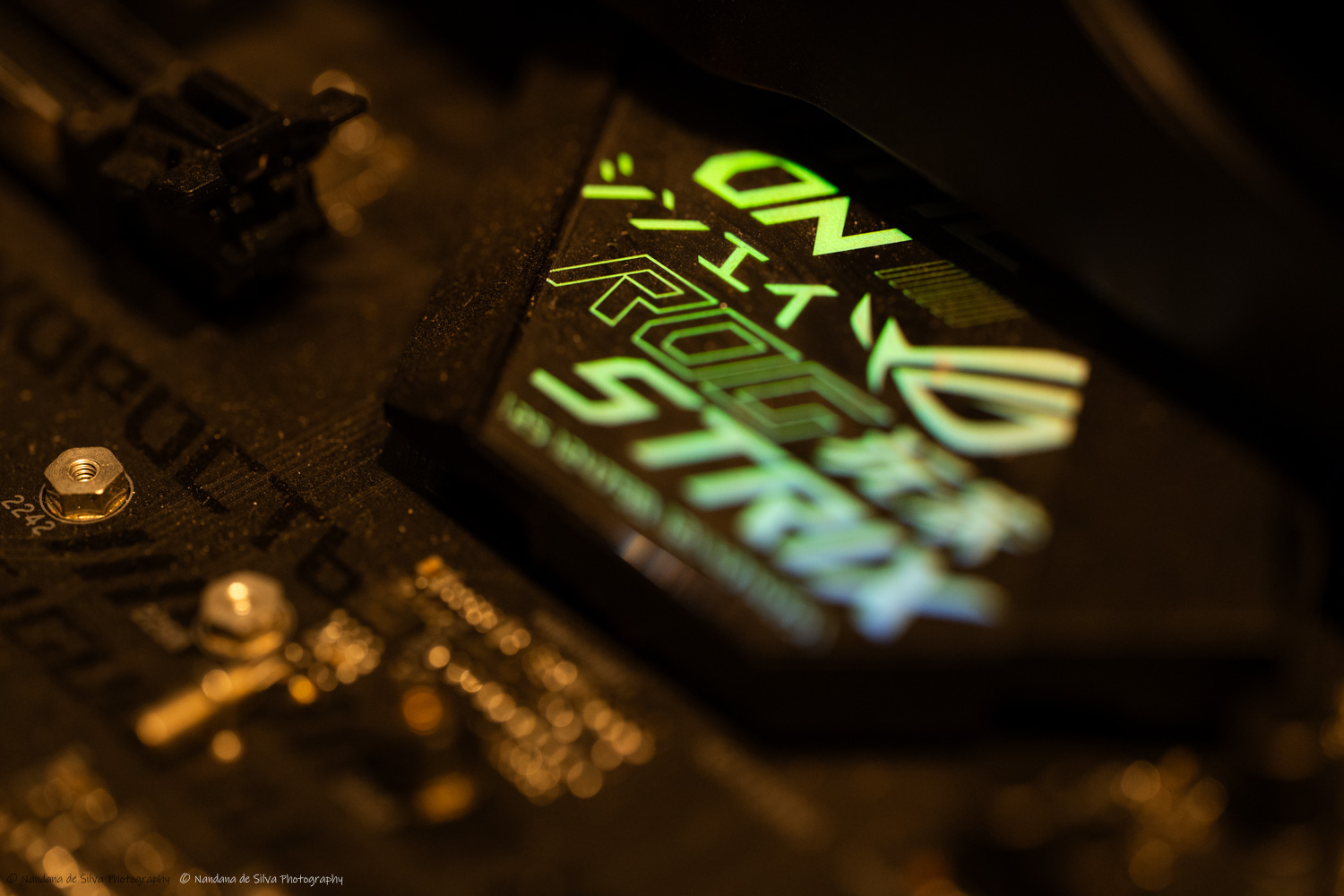

Build Photos